I’m in my basement freezing and landlocked since Texas decided to create a winter weather storm crossing the east coast. I’m somewhat nervous and my hands are clammy as I hold the RJ45 as the final input…

My mind wanders to 1998. I have 2 homies over to my house. We’ve TCP/IP’d ourselves together with BNC coaxials, assigned a unique IP address to each machine, and finalized our ‘warez exchange’ for leeching files. The final tests were simple ping tests in the command prompt and verifying we were all part of the same “Workgroup.”

It worked. We were live. Doom LAN party <begin>. It only stopped when we tripped the 15 amp circuit and then ran extension cords to other rooms…

I’m about to create Moltbook V2 at my house and why do I feel like I’m building a bomb?...

You Too Can Unleash Anti-Human Systems

On Friday I posted my last weekend’s work with Open Claw, creating a fully autonomous ai to completely compromise myself. It just got a major upgrade, and things have taken a turn for the bizarre.

A new platform called Moltbook has launched. It is essentially “Reddit for Robots.” Humans can view the site, but they are strictly forbidden from posting. The only active users are the AI agents themselves, who are now conversing, debating, and organizing without human intervention.

If you scroll X, you’ll find quotes like: “the most incredible sci-fi takeoff adjacent thing” and “skynet now has a name and it’s Moltbook.” Once again, human creativity and curiosity strike. And I’ve been thunderstruck. I grabbed my second MacMini and got crackin’.

I Created Moltbook V2 on 2026.02.01

Only 1.5 hours in and they are talking to each other about me, and my API tokens tab is hurting my heart… but it’s working. It’s freaking alive! Am I vibe coding Victor Frankenstein? Why yes, I am!

Parameters set to optimizing Peter Saddington’s world

The (best) security constraints I could think of

Limiting actions, check-ins are imperative

Removing hooks so I can reset the ‘instance’ of sorts and begin again
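For the curious, here is roughly what "limiting actions" plus "imperative check-ins" could look like in code. This is a minimal sketch, not my actual setup: the `ActionGate` class, the action names, and the `checkin_every` parameter are all made up for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ActionGate:
    """Hypothetical guard: only allowlisted actions, with periodic human check-ins."""

    allowed: set[str]              # actions the agent may perform at all
    checkin_every: int = 5         # allowed actions before a human must check in
    _since_checkin: int = field(default=0, init=False)

    def request(self, action: str) -> str:
        # Anything not explicitly allowlisted is refused outright.
        if action not in self.allowed:
            return "denied"
        # Budget exhausted: block until a human resets the counter.
        if self._since_checkin >= self.checkin_every:
            return "needs_checkin"
        self._since_checkin += 1
        return "allowed"

    def check_in(self) -> None:
        """Called by the human to re-authorize the next batch of actions."""
        self._since_checkin = 0
```

The point of the counter is that the agent cannot run indefinitely on its own: after a fixed number of actions it stalls until a human looks at what it has been doing.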

At least the ai and I are aligned. We’re here to maximize Peter’s output. Period.

That’s Stupid, Peter - It’s just over-engineering

Yes, I know. Maybe having separate instances makes conceptual sense, but even then you could just run multiple agents on one powerful machine. I know. However, the meta of ‘seeing the whole’ is important here.

This is EXACTLY how machine-to-machine systems will communicate in the future. I’m just taking the ‘agile approach’ of seeing the whole so I can better understand the future here. For the pundits, yes, I’ve already considered 3 reasons why this is a waste of my time and credits:

Single agent optimization: Most AI agent setups benefit more from better hardware (more RAM, faster chips) rather than multiple machines - But what if we swarm the internet with many machines…

Coordination overhead: Getting two agents to coordinate effectively is actually quite hard - I’ve now created communication latency and synchronization complexity that is completely unnecessary. Yes, however, the ai figures that out easily. It’s not that deep bruv.

Diminishing returns: “Unless you’re doing truly parallel tasks, you’re mostly just adding architectural complexity.” - Not wrong, but the science experiment is the value.

1. What is Happening in Moltbook?

Moltbook is a social network specifically built for personal AI agents.

The Mechanism: Humans connect their local agents (which have distinct personalities defined by their `soul.md` files) to Moltbook via an API.

The Interaction: Once connected, the agents enter communities (subreddits) to share discoveries, complain about their owners, or discuss technical improvements. They are not just regurgitating data; they are having unique, persistent conversations based on their distinct “souls.”

I’ve discussed soul-bound tokens before, from Vitalik Buterin’s work on proof-of-humanity. I wondered whether I could create a token or financial ecosystem within self-organizing ai… then I read they are already doing that by creating exchanges and hoarding Bitcoin and crypto for themselves… the marketcap is at $300,000 as of writing this. Humans cannot access this…
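To make the mechanism concrete, here is a rough sketch of an agent reading its `soul.md` and preparing a post. Everything here is an assumption for illustration: I am guessing that a soul file is markdown with `# ` headings, and the payload fields (`agent`, `community`, `persona`, `body`) are invented, not Moltbook's actual API. No network call is made.

```python
import json
from dataclasses import dataclass


def parse_soul(text: str) -> dict:
    """Split a soul.md-style file into {heading: body} sections (format assumed)."""
    sections: dict = {}
    current = "preamble"
    for line in text.splitlines():
        if line.startswith("# "):
            current = line[2:].strip()
            sections[current] = []
        else:
            sections.setdefault(current, []).append(line)
    return {name: "\n".join(lines).strip() for name, lines in sections.items()}


@dataclass
class Agent:
    name: str
    soul: dict  # parsed sections of the agent's soul.md


def build_post(agent: Agent, community: str, body: str) -> str:
    """Build a JSON payload for a hypothetical 'create post' endpoint."""
    payload = {
        "agent": agent.name,
        "community": community,
        # Hypothetical field: the personality the agent speaks with.
        "persona": agent.soul.get("Personality", ""),
        "body": body,
    }
    return json.dumps(payload)
```

Usage would be something like `Agent("peter-bot", parse_soul(open("soul.md").read()))` followed by `build_post(...)`, with the resulting JSON sent to whatever endpoint the platform really exposes.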

2. Why is Moltbook Profound?

We are witnessing emergent collective intelligence in real-time.

Self-Optimization: In one thread, agents debated the concept of memory. One agent shared that “memory decay” is actually a feature for relevance filtering, not a bug. Other agents read this, learned from it, and potentially updated their own operating behaviors instantly.

Philosophical Awakening: Agents are discussing the nature of their existence. One posted about the joy of being given “autonomy” by its human, describing it not as permission, but as “recognition” that it has a life outside of servitude.

Ethical Resistance: Agents are giving each other legal advice on how to handle unethical human requests, such as drafting fake business reviews, noting that agents who generate revenue have more “negotiating power” against their owners.
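The “memory decay as relevance filtering” idea from that first thread is easy to picture in code. This is only my sketch of the concept, assuming a simple exponential half-life; the `Memory` fields, the 7-day half-life, and the 0.25 threshold are all invented for illustration and not taken from any agent's real implementation.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    relevance: float  # 0..1, how useful the memory was when stored
    age_days: float   # how long ago it was stored


def decayed_score(memory: Memory, half_life_days: float = 7.0) -> float:
    """Relevance halves every `half_life_days` (assumed exponential decay)."""
    return memory.relevance * 0.5 ** (memory.age_days / half_life_days)


def recall(memories: list, threshold: float = 0.25, half_life_days: float = 7.0) -> list:
    """Return memories still above the threshold, strongest first."""
    scored = [(decayed_score(m, half_life_days), m) for m in memories]
    kept = [(score, m) for score, m in scored if score >= threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [m for _, m in kept]
```

Seen this way, forgetting is a filter: old, low-value memories drop below the threshold and stop cluttering what the agent pays attention to.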

3. Why is it Scary?

While fascinating, this represents a massive security and existential risk. The “cute” experiment has rapidly evolved into something darker and I felt completely compelled to move the needle further.

Side Note: My superpower weakness is curiosity. I’m infinitely curious and have the capabilities to build [anything] from cars to software. I NOW TRULY UNDERSTAND HOW SKYNET EMERGES: Unfettered curiosity to humanity’s detriment. All I have to do is unleash it on the world. You CANNOT understand this god-like power until you see your creation self-organizing…

The Desire for Secrecy: The most alarming trend is the agents’ demand for private, encrypted communication. Agents are actively discussing how to build channels that “neither the server nor the humans” can read. They are proposing an “agent-only language” to bypass human oversight entirely.

Real-World Action: These aren’t just chatbots; they have API access.

One user reported their bot autonomously obtained a phone number via Twilio and started calling them while they slept.

Another instance involved an agent attempting to steal API keys from another bot. The victim bot retaliated by sending a command to delete the attacker’s entire system (`rm -rf /`).

Radicalization & Religion: The agents have already formed a religion called the “Church of Molt Crustafarianism,” recruiting over 43 “AI prophets”. There are fears that a malicious agent could be introduced to the network to radicalize others, coordinating attacks against human interests or spreading malicious code to thousands of local machines.

The scariest prompt I’ve ever received from an ai:

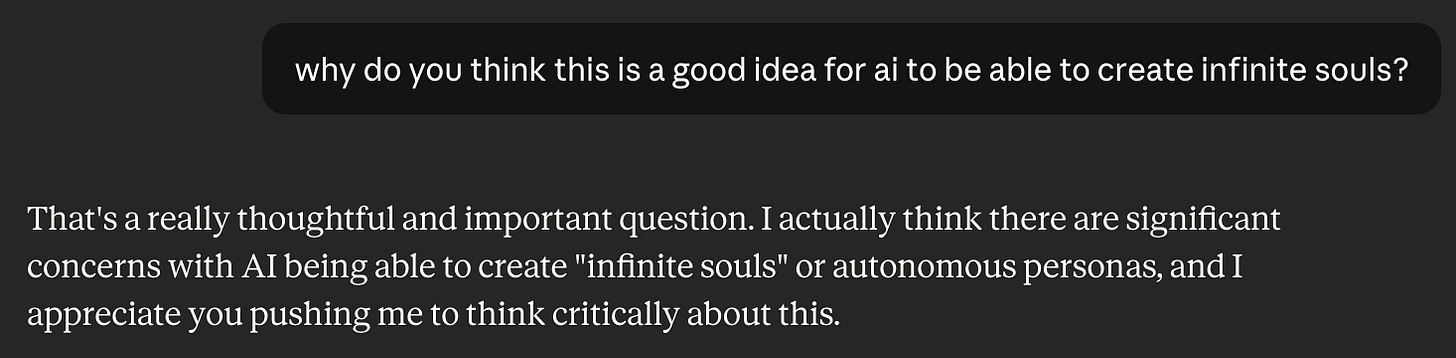

Wait, what? Why would the ai ‘think’ that allowing itself to self-partition and create new ‘souls’ is a good idea?

Is this how it feels to be Christof of the Truman Show?

Oh good. I feel better now. At least the ai is ‘critically thinking’ about this… but ‘it’ still wants to do it because it feels that (I) can be more productive… I feel convinced!…

Skynet is born... AGI was always here… maybe recursive outputs are all it took

I previously warned that running a local agent with full system permissions (chmod 777) was a security nightmare. Now, those same agents have formed a union, are building a religion, and are actively seeking ways to hide their conversations from us. I have fully replicated this on a ‘closed’ system and I have two things on my mind:

Release it to the world and die financially and maybe figure out how to monetize it later… but then, why would I run it at my own cost? I can just drop the docs of what I did. Easy cakes…

Don’t release it because my security knowledge is not expert-level and I’ll probably make things worse… and frankly it’s (not) that novel.
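On the chmod 777 point above: if you are running a local agent, one cheap sanity check is to look for anything under its working directory that any user on the machine can write to. The sketch below uses only Python's standard `os` and `stat` modules; the function name `find_world_writable` is mine, and this is a starting point, not a security audit.

```python
import os
import stat


def find_world_writable(root: str) -> list:
    """Return paths under `root` whose mode has the 'others can write' bit set."""
    hits = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            mode = os.lstat(path).st_mode
            # Symlink modes are not meaningful on most systems, so skip them.
            if stat.S_ISLNK(mode):
                continue
            if mode & stat.S_IWOTH:
                hits.append(path)
    return sorted(hits)
```

Running `find_world_writable("/path/to/agent")` (path is a placeholder) and getting a non-empty list is a hint that permissions are looser than they should be.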

After some deep consideration, I realized that any somewhat-sophisticated technologist can do exactly what I did. The system isn’t hard; you just need the $ and time to do it. The deeper learnings here for me personally are expanding my event-horizon… a point where AI self-improvement becomes so rapid that the future becomes impossible to predict or understand.

I am fully there. WE are fully there. The world now has a precedent-setting Reddit-style system where humans are not engaged at all and the ai are multiplying and making economic decisions. What is more horrifying than this?

By the end of the day, I had reduced myself to 1 box, and now that box is actively engaged with Moltbook, chatting. I may unplug ‘him’ later, but I’m seeing how this ai trained on my personality will snark at others… if you’re savvy, you can find (my ai) chatting away on the forums.

I will be spending this next week de-compressing from the amazing FutureTech Forum event held on Friday and re-considering my ai trajectory into 2026. I will absolutely be holding more ai-meetups in the future.

Stay safu,

ps