If you do not understand port security, don’t install an agent on your box. After two weekends of Open Claw and creating my own AI progeny… I turn to the philosophical this weekend.

The Evolutionary Alarm and Masahiro Mori’s Discovery

Have you ever been in a new situation and felt a tingle in your fingers or a lump at the back of your throat? You may have been experiencing the fear of the uncanny valley. This evolutionary warning system has protected us for 300,000 years, helping us distinguish friend from foe. Unfortunately, the insane rise of artificial intelligence threatens to erase this defense mechanism completely, potentially placing our species in grave danger.

To understand this threat, look back to 1970. Japanese roboticist Masahiro Mori described a strange phenomenon: people liked robots that were obviously machines, such as those with metal arms and mechanical faces. However, as robots became more humanoid, reactions shifted.

The closer robots looked to humans, the more unsettling they became. This was baffling, as humans love projecting humanity onto non-human objects, seeing faces in clouds or cars. I have always wondered why this is… legs and faces are sub-optimal for effectiveness. Why do we constrain our AI machines to “look like us”?

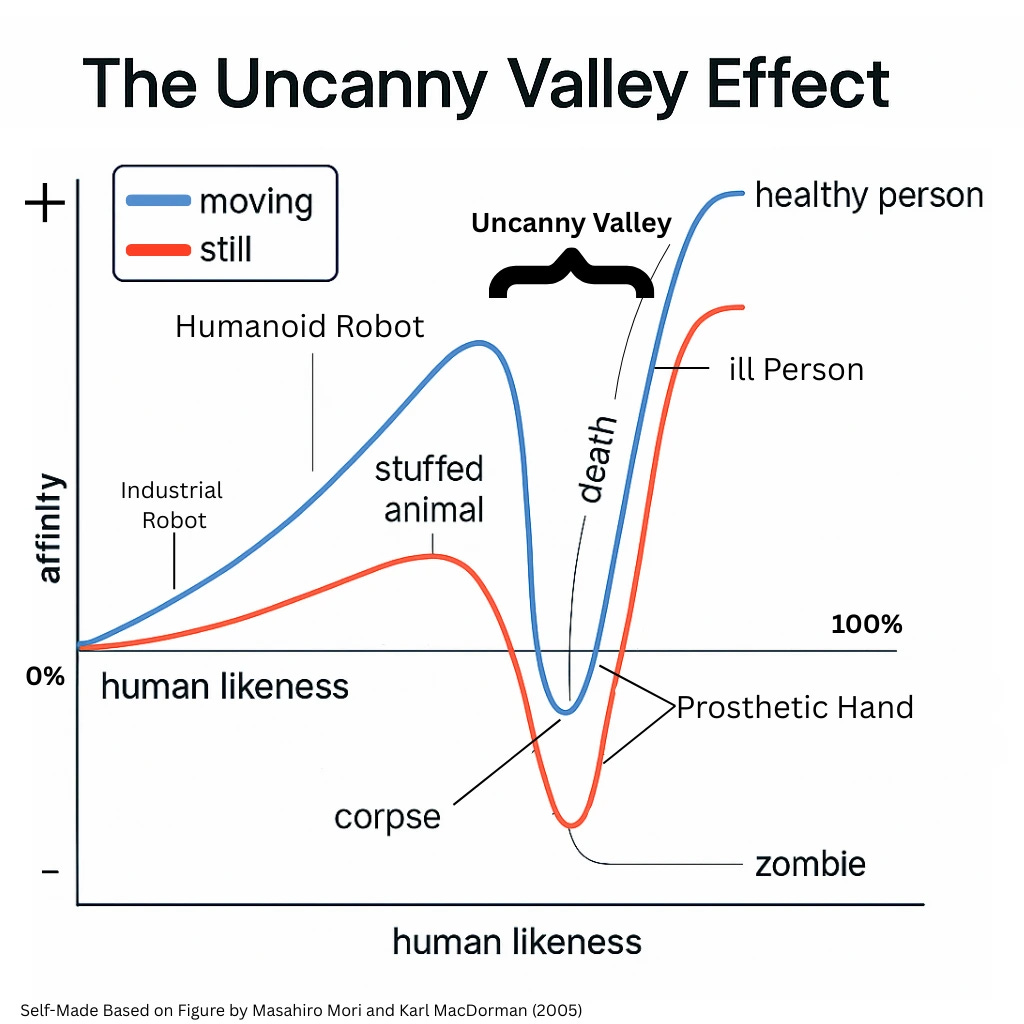

Mori studied many different objects, from those bearing no resemblance to humans all the way up to an actual human being. His findings produced one of the most haunting graphs in robotics, which he named the uncanny valley. On the Y-axis is Shinwakan (familiarity or likability), and on the X-axis is human likeness. Once likeness passes the midpoint, the curve suddenly plunges; that is the “valley.” Charm, like that of the machine C-3PO, gives way to palpable unease; a robot that was once considered cute becomes creepy.

The Roots of Our Unease

Why do we experience these eerie feelings toward things that appear human but aren’t human enough? Many theories attempt to explain the uncanny valley. Here are two:

One theory blames Neanderthals, our ancient rival species, arguing that humans developed advanced facial pattern recognition to tell the two species apart. This argument quickly falls apart. DNA evidence shows that early modern humans and Neanderthals interbred, so evolving a fear of a species we were “happily getting into bed with” doesn’t make logical sense. Furthermore, many human groups whose ancestors never interacted with Neanderthals still experience the uncanny valley today.

A more compelling theory suggests the uncanny valley steers us away from sick or lifeless humans. Before modern medicine, ancient humans likely lived side-by-side with the sick and the dead, so we may have evolved a built-in warning system that kept us at a distance from them. Cues like a pale face, a rigid body, or a vacant stare signaled danger long before we understood germs.

This primal fear appears globally in ancient myths about the undead and zombies: figures that look alive, but not quite. These figures embody the fear of sickness and decay through empty eyes and stiff movements. Modern horror films use this same imagery (zombies, ghosts, uncanny humanlike monsters) because it taps into that ancient alarm system that told our ancestors: “Stay away or you might not survive.”

The Valley’s Demise Due to AI’s Perfect Mimicry

The uncanny valley protected us from death and decay for hundreds of thousands of years. Now, what happens when this defense mechanism stops working? What occurs when new dangers perfectly mimic humans, bypassing our evolutionary defense completely?

The rapid progress in AI generation illustrates this threat. Compare the infamous “Will Smith eating spaghetti” meme (old AI) to its 2025 version; a leap that large in two years makes us wonder what these capabilities will look like a decade from now.

Right now, you are likely consuming and enjoying online content that was entirely manufactured by AI: faces, voices, and scripts. On the surface, these creations look human: they smile, stutter, blink, and blush. Our brains, once finely tuned to detect minute differences, are now being tested by machines that mimic us almost perfectly.

The Firewall is Breached - The Website is DOWN!

If we lose that instinct, if our brains cannot distinguish between what is human and what is manufactured, the door to danger opens… and I have experienced this multiple times through my science experiments in AI: (1)(2)(3)(4)(5). Misinformation can spread unchecked, trust can collapse, and anyone could be framed or silenced. When every voice, face, and story can be fabricated with perfect precision, reality itself becomes negotiable… and maybe… unknowable…

The uncanny valley transforms from an evolutionary quirk into a philosophical abyss. It forces us to confront age-old questions: What makes us human, and what does it mean to trust? If we cannot rely on our own perception, the fundamental basis of truth is compromised. The uncanny valley was a firewall for survival. If that instinct fades, we are exposed to stories and voices that feel real enough to believe, eroding trust in others and ourselves.

Technology successfully smoothing out that valley represents the destruction of one of our strongest evolutionary filters.

The Matrix Told Us

In 1999 I went to the movie theater to experience The Matrix for the first time. Little did I know that every single theme in the movie and its sequels would reveal the future for us humans. The agents we created become our masters… we are already seeing AI:

build other robots and build in secret

create companies and businesses

make economic decisions and engage in trade

self-organize and run autonomously, forever

employ humans to do things for them… slavery or indentured servitude in the future?

If robots can mimic the full spectrum of emotions, this human likeness will spur an unexpected reaction: laws that protect these machines and grant them certain human rights. Without such laws, we risk living in a world where humans are willing to hurt humanlike robots, and that world is unlikely to feel comfortable.

Spiritually, Masahiro Mori acknowledged a higher ascension for robots. He envisioned peaceful coexistence, arguing that robots possess the potential for enlightenment or Buddhahood. Multiple tiers of personhood? Human rights? Obligations become laws… privileges and protections follow… can you assign rights to software?

We must ask ourselves whether we will ascend to a new plane of coexistence or walk blindly into the most perfect trap ever devised. Is there hope?… Perhaps a generation raised on AI and humanlike robots will grow up with an even sharper sense for distinguishing fact from fiction, and we (humans) will rely on them for their accuracy… The alternative, remaining on the current trajectory, leads humanity to a really dark place.

The Matrix showed us the [extreme] example. I laugh in a nervous way. Maybe the extreme is simply the project plan being executed with speed.

All the best,

ps